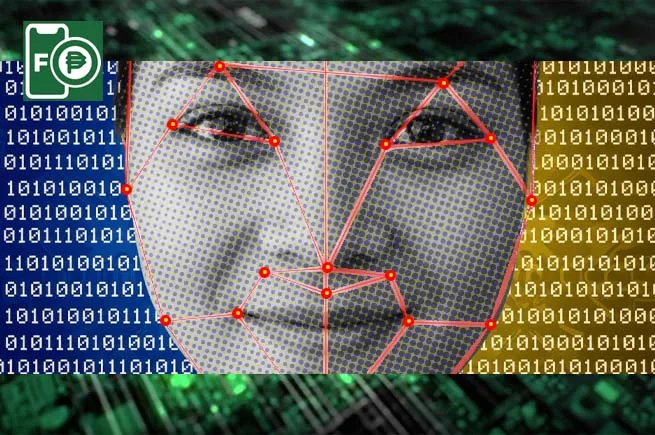

As generative AI accelerates the evolution of cyber threats, identity verification is emerging as a critical frontline in digital security.

In its latest Threat Intelligence Report, iProov flagged a sharp rise in AI-powered deception tactics, with injection attacks targeting iOS devices surging by 1,151% in the second half of 2025, contributing to a 741% increase for the full year.

IMAGE CREDIT: iProov

The report, based on global threat intelligence and real-time monitoring, highlights how cybercriminals are increasingly leveraging generative AI to scale impersonation attacks — targeting systems used for onboarding, authentication, and high-value transactions.

Identity becomes a key battleground

“Identity is becoming the new battleground in cybersecurity,” said Andrew Newell, Chief Scientific Officer at iProov.

He noted that generative AI is enabling attackers to “industrialize digital impersonation,” allowing fraud schemes to move from isolated incidents to repeatable, large-scale operations.

Dr. Andrew Newell, Chief Scientific Officer at iProov

Industry data reinforces this trend. Research from the Ponemon Institute shows that 41% of organizations have experienced deepfake attacks targeting executives, while a separate study by Gartner found that 37% of cybersecurity leaders encountered deepfake incidents during video calls.

Recent cyber incidents involving companies such as Marks & Spencer and Jaguar Land Rover further illustrate how gaps in identity and access management can expose organizations to operational disruption.

iOS emerges as a growing attack surface

The report points to a significant shift in attacker focus toward iOS devices, traditionally perceived as more secure environments.

While injection attacks on iOS devices rose by a modest 14% in the first half of 2025, they surged by 1,151% in the second half of the year.

According to iProov, this signals the mainstreaming of advanced attack techniques — once limited to highly resourced actors — into scalable, repeatable playbooks.

Deepfakes move into everyday business workflows

Beyond device-level attacks, deepfake technology is increasingly being deployed across routine corporate processes, particularly in video-based interactions.

Advances in AI tools have lowered the barrier to creating realistic synthetic identities, enabling attackers to generate convincing impersonations from minimal source material. This expands the threat beyond traditional verification systems into internal communications and approval workflows.

Southeast Asia flagged as emerging testing ground

The report also highlights the growing role of Southeast Asia in the global fraud landscape.

The region recorded a 720% spike in identity-based attacks in the third quarter of 2025, suggesting it is being used as a testing ground for new fraud techniques, including virtual camera exploits and the use of stolen KYC data.

Once validated, these methods are often scaled to other markets, accelerating the global spread of coordinated identity attacks across financial institutions and digital platforms.

Shift toward continuous identity threat detection


As threats evolve, iProov warns that traditional, static approaches to identity verification are no longer sufficient.

Instead, organizations are being pushed to adopt continuous identity threat detection systems that can adapt in real time, supported by updated global standards such as NIST SP 800-63-4 and emerging biometric verification frameworks.

The report draws on data from iProov’s Security Operations Center, incorporating real-time threat detection, dark web monitoring, and biometric research to map the rapidly changing threat landscape.

As AI continues to lower the cost and complexity of launching attacks, the ability to verify not just identity but genuine human presence may become a defining factor in securing digital ecosystems.